Every company worth its salt is vying to get ahead in the AI wars. But there’s little doubt that the two companies leading the charge in changing how the average user interacts with AI every day are OpenAI and Google. Yes, after a bumpy start, Google has more than caught up with its Gemini model. Now, both companies have showcased something similar at their Spring events that takes us closer to the “Her” moment of AI.

OpenAI’s GPT-4o and Google’s Project Astra both aim to create the ultimate AI assistants of the future. Both are natively multimodal models that show amazing voice and video capabilities.

Given how similar the two products are, it’s only natural to want a comparison. Neither product is generally available at the moment, so a hands-on comparison isn’t possible yet; this one is based on what the two companies showed in their demos. So, let’s dive in.

Capabilities

If you haven’t seen the demos for the two products, both show some amazing capabilities. Project Astra and GPT-4o Voice Mode can each hold a continuous, natural-sounding two-way conversation with the user, and you can interrupt the AI mid-sentence, just like you can another person.

Both also have vision capabilities: they can see you and the world around you through the device’s camera and interpret what they see. On vision, the two appear to be neck and neck, with both adept at accurately interpreting what is in front of them. Note that they can not only describe what they see but also understand it in context.

For example, they can work out where you are from your surroundings, or recognize a reference to Hamlet in a drawing of a skull and a hand. They can also help with maths problems and interview prep. GPT-4o can even translate languages in real time.

However, when it comes to voice capabilities, GPT-4o seems to be the clear winner at the moment. It can change its voice and tone on request, like breaking into a song, adding more drama to its delivery, or sounding completely robotic. All in all, it can make you forget for a while that you aren’t talking to a human.

Apart from that, GPT-4o is also capable of understanding emotions and context from the user’s voice and facial expressions. Whether Project Astra has any similar capabilities is unclear, as Google showed no such demonstration.

Under the Hood

Under the hood, Google’s Project Astra has one major advantage. It is powered by the Gemini 1.5 Pro model, which has a long context window of 1 million tokens. And with Google already testing a 2-million-token context window for Gemini, Project Astra has an edge.

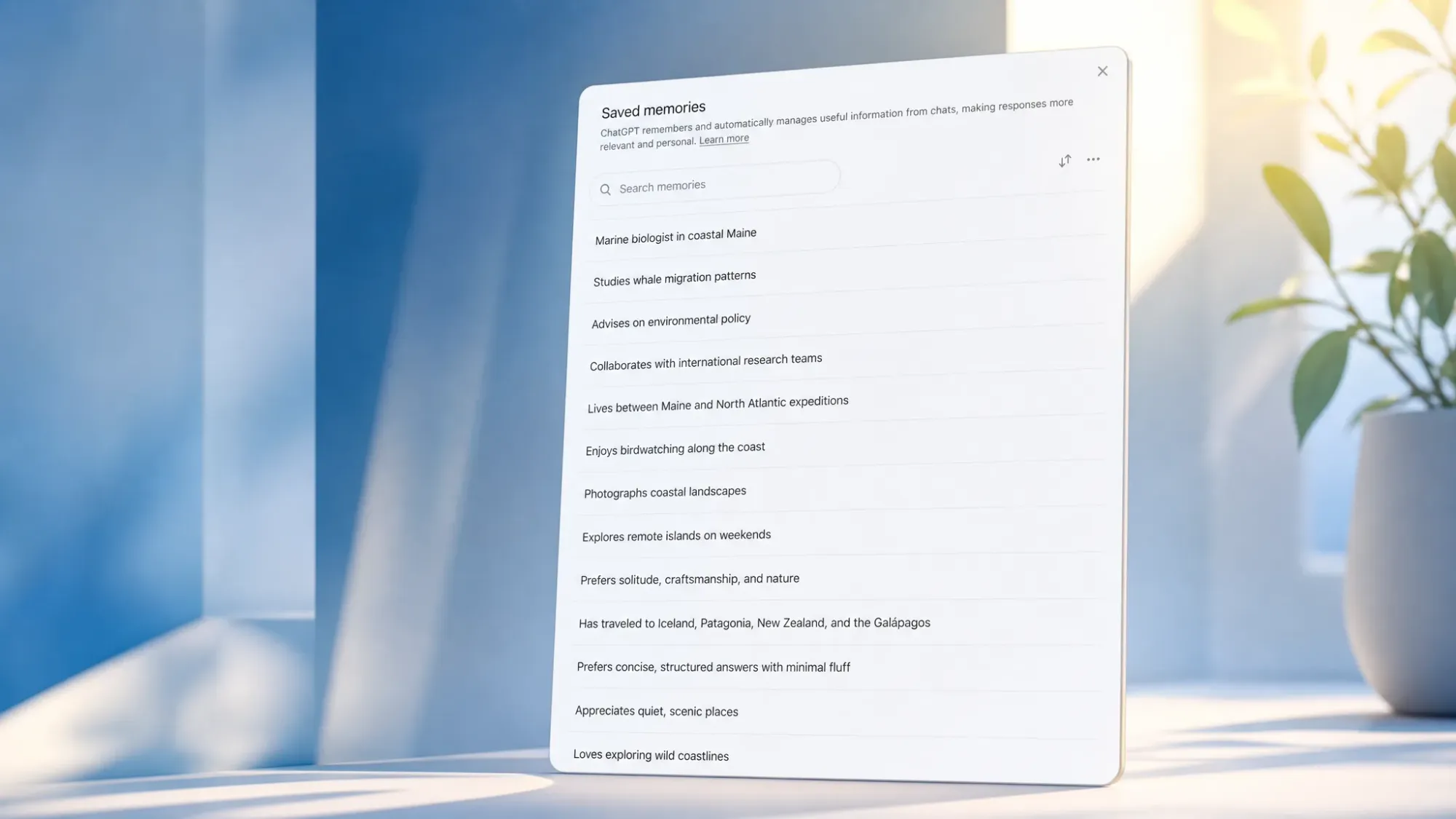

It is probably because of this long context window that Project Astra can cache information it saw and recall it later.

GPT-4o, on the other hand, has a 128K-token context window, which makes tasks such as recalling something it saw much earlier in a session more difficult for it.
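To put those numbers in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the context window sizes quoted above; treating "128K" as 128,000 tokens is an assumption, and the model labels and helper names are made up for illustration, not taken from either company’s API.

```python
# Context window sizes in tokens, as quoted in this article.
# Assumption: "128K" is read as 128,000 tokens.
CONTEXT_WINDOWS = {
    "Gemini 1.5 Pro": 1_000_000,
    "Gemini 1.5 Pro (2M test)": 2_000_000,
    "GPT-4o": 128_000,
}


def window_ratio(larger: str, smaller: str) -> float:
    """Return how many times bigger one model's window is than another's."""
    return CONTEXT_WINDOWS[larger] / CONTEXT_WINDOWS[smaller]


def fits_in_window(model: str, token_count: int) -> bool:
    """Check whether a session of `token_count` tokens fits in the window."""
    return token_count <= CONTEXT_WINDOWS[model]


if __name__ == "__main__":
    print(window_ratio("Gemini 1.5 Pro", "GPT-4o"))            # 7.8125
    print(window_ratio("Gemini 1.5 Pro (2M test)", "GPT-4o"))  # 15.625
    # A hypothetical 500,000-token session, purely for illustration.
    print(fits_in_window("Gemini 1.5 Pro", 500_000))           # True
    print(fits_in_window("GPT-4o", 500_000))                   # False
```

In other words, the 1-million-token window is roughly eight times the size of GPT-4o’s, and the 2-million-token version would be roughly sixteen times. How many tokens a live voice and video session actually consumes hasn’t been disclosed by either company, so the 500,000 figure is only a placeholder.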

There are other differences between the two models under the hood as well. GPT-4o has native multimodality for both input and output, whereas Google’s Gemini still depends on other models, like Imagen 3 and Veo, for image and video output.

Practical Use

If we were to evaluate GPT-4o and Project Astra solely on the basis of their practical use right now, GPT-4o would emerge as the clear winner again. While neither is available currently, OpenAI’s GPT-4o will reach users in the coming months, which puts it well ahead on the timeline.

ChatGPT Plus subscribers will get access to GPT-4o through the new Voice Mode in the ChatGPT app. The voice and vision capabilities of GPT-4o will also come to the ChatGPT macOS app pretty soon. While a Windows app is still some way off, some Windows users will have access to GPT-4o’s voice and vision capabilities through Copilot.

Project Astra, on the other hand, is still in the prototype stage. An earlier implementation of Project Astra, Gemini Live, will arrive in the Gemini Android and iOS apps for Gemini Advanced subscribers in the coming months. However, at release, it will only have voice capabilities; video capabilities will follow by the end of this year. Google has also made no announcement on whether the Live experience will be available on Gemini for desktops.

While GPT-4o is undoubtedly ahead of Project Astra currently, Gemini Live does have one edge: it will see wide use as soon as it is released, because Gemini has replaced Google Assistant on Android. However, if there is any weight to the rumors of an OpenAI and Apple partnership, OpenAI’s GPT-4o could soon be powering an assistant on iPhones, which would give it an even wider reach.