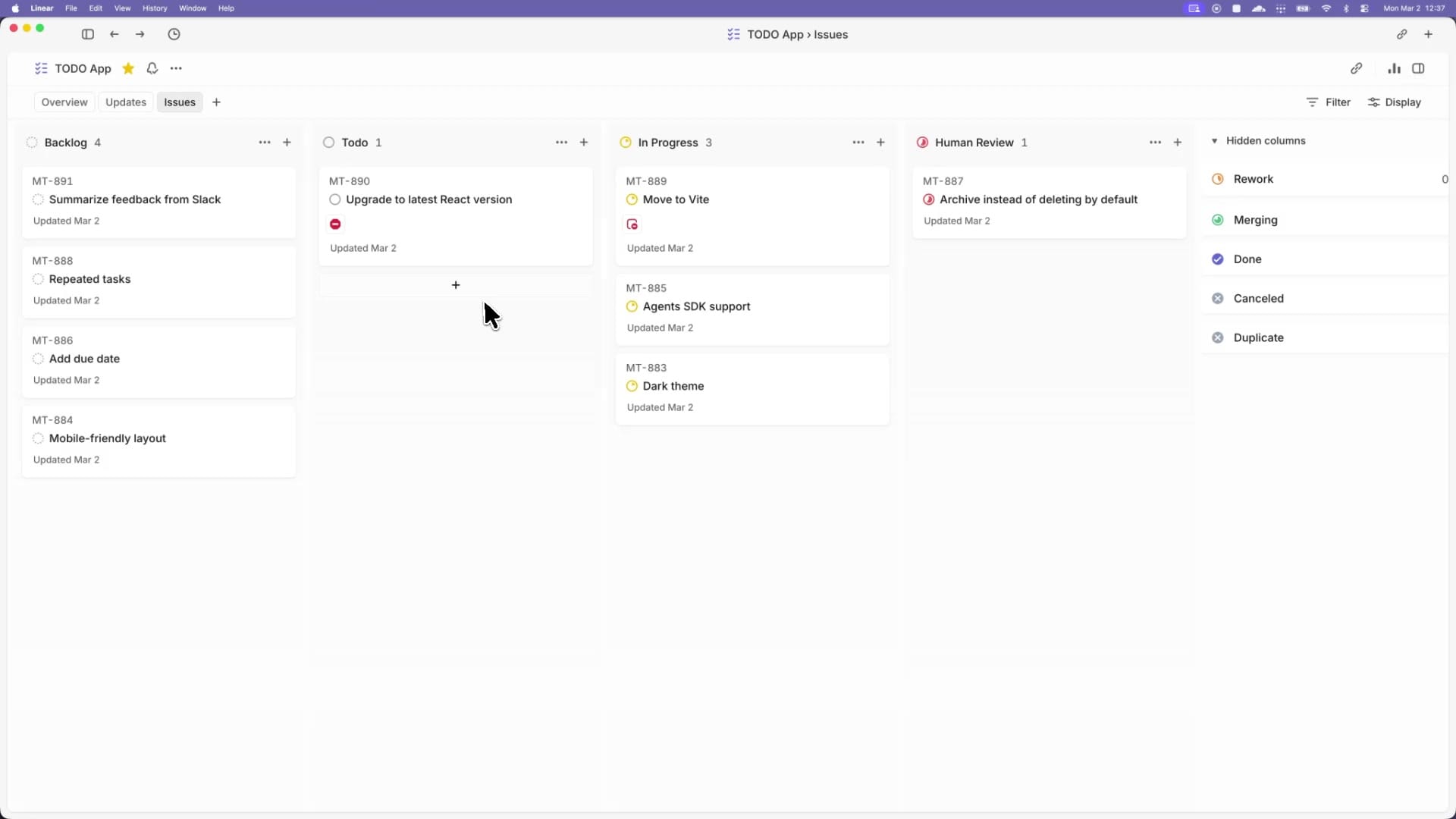

Symphony is an open-source orchestration spec from OpenAI that wires Codex agents directly into an issue tracker like Linear, so every open ticket gets its own agent, its own workspace, and its own continuous run loop. Instead of juggling Codex sessions in browser tabs and terminals, engineers manage the work itself, and the orchestrator keeps agents alive, retries failures, and watches tracker state until tickets land.

Quick answer: Symphony is a long-running daemon that polls a project board, spawns one Codex agent per active issue inside an isolated workspace, and lets the agent drive the ticket through to a handoff state such as Human Review or Done. The full spec lives in a single SPEC.md file, with an Elixir reference implementation in the same repo.

What Symphony actually is

Symphony is not a product OpenAI plans to maintain. It is a language-agnostic written specification that describes how to build a scheduler that reads tickets from Linear, creates a per-issue workspace on disk, and runs a Codex coding-agent session inside that workspace. The reference implementation is written in Elixir for its concurrency primitives, but the team also had Codex re-implement the spec in TypeScript, Go, Rust, Java, and Python to flush out ambiguities.

The core promise is one line: for every open task, guarantee that an agent is running in its own workspace. Everything else, including ticket writes, PR creation, CI shepherding, and review-packet generation, is handled by the agent itself using tools defined in a repo-owned WORKFLOW.md file.

The problem it solves

At scale, interactive coding agents hit a human-attention ceiling. Engineers running three to five Codex sessions in parallel start losing track of which session is doing what, jumping between terminals to nudge stalled agents, and forgetting context. The agents are fast; the humans become the bottleneck.

Symphony decouples work from sessions and from pull requests. A single ticket might produce multiple PRs across repos, or none at all if the task is pure investigation. The orchestrator watches ticket statuses as a state machine and ensures each active issue always has a worker attached. If an agent crashes or stalls, it gets restarted. If a new ticket appears, an agent picks it up automatically.

Inside OpenAI, some teams reported a 500% increase in landed pull requests during the first three weeks of using Symphony, with engineers shifting from supervising Codex to filing speculative tickets and reviewing finished work.

How the architecture is layered

The spec defines six logical layers that any conforming implementation should keep separable:

| Layer | Responsibility |
|---|---|
| Policy | The WORKFLOW.md prompt and team-specific rules for ticket handling and handoff |
| Configuration | Typed getters that parse YAML front matter, apply defaults, expand env vars and paths |
| Coordination | The orchestrator: poll loop, eligibility, concurrency, retries, reconciliation |
| Execution | Per-issue workspace lifecycle and the coding-agent subprocess |
| Integration | The Linear adapter (or any tracker that produces the normalized issue model) |
| Observability | Structured logs and an optional runtime status surface |

The orchestrator is the only component that mutates scheduling state. Workers report outcomes back to it, and those outcomes become explicit state transitions. Restart recovery is tracker-driven and filesystem-driven, so no durable database is required.

The WORKFLOW.md contract

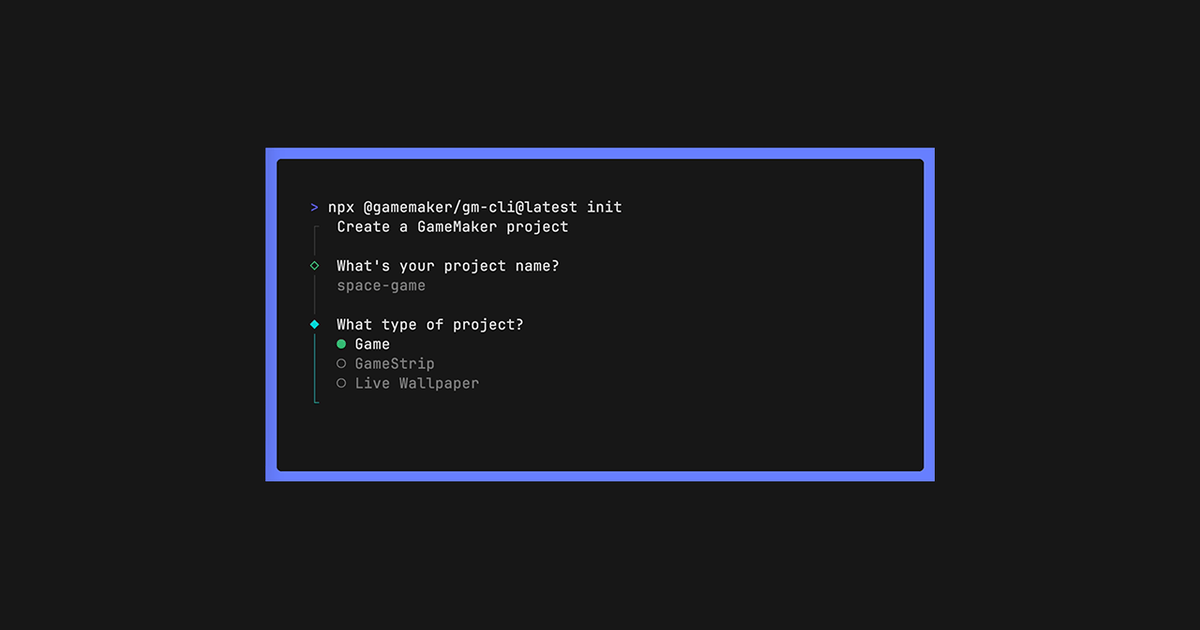

Each repo using Symphony ships a WORKFLOW.md file that combines optional YAML front matter with a Markdown prompt body. The front matter holds runtime settings; the body becomes the per-issue prompt template, rendered with strict variable checking. Unknown variables or filters fail the render rather than passing silently.

Top-level front matter keys include tracker, polling, workspace, hooks, agent, and codex. The file is meant to be self-contained and version-controlled, so the workflow policy travels with the code. Symphony also watches the file and reloads config dynamically without a restart, applying changes to future ticks, retries, and agent launches.
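To make the file contract concrete, here is a minimal Python sketch of splitting a WORKFLOW.md into front matter and prompt body, then rendering the body strictly. It is an illustration under assumptions, not the spec's mechanism: the helper names `split_workflow` and `render_prompt` are hypothetical, the `---` delimiters follow the common front matter convention, and Python's `string.Template` stands in for whatever template language a real implementation uses (it has no filters). The point it shows is the failure mode: an unknown variable raises instead of rendering as blank.

```python
from string import Template


def split_workflow(text: str) -> tuple[str, str]:
    """Split WORKFLOW.md text into (front_matter, body).

    Front matter is optional; without a closed `---` block the whole
    text is treated as the prompt body.
    """
    lines = text.splitlines()
    if lines and lines[0].strip() == "---":
        for i in range(1, len(lines)):
            if lines[i].strip() == "---":
                front = "\n".join(lines[1:i])
                body = "\n".join(lines[i + 1:])
                return front, body.strip()
    return "", text.strip()


def render_prompt(body: str, variables: dict[str, str]) -> str:
    """Render strictly: a placeholder missing from `variables` raises KeyError."""
    return Template(body).substitute(variables)


# Hypothetical file content, for illustration only.
EXAMPLE = """---
polling:
  interval_ms: 30000
---
Work on $identifier: $title
"""

front_matter, prompt_body = split_workflow(EXAMPLE)
prompt = render_prompt(prompt_body, {"identifier": "ABC-123", "title": "Example"})
```

The front matter string would then go to a YAML parser; that step is left out here to keep the sketch dependency-free.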

| Key | Default | Purpose |
|---|---|---|
| tracker.kind | linear | Currently the only supported tracker |
| tracker.endpoint | Linear GraphQL endpoint | |
| tracker.api_key | $LINEAR_API_KEY | Auth token, literal or env-resolved |
| tracker.active_states | Todo, In Progress | States eligible for dispatch |
| tracker.terminal_states | Closed, Cancelled, Canceled, Duplicate, Done | States that release claims |
| polling.interval_ms | 30000 | Poll cadence (30s) |
| agent.max_concurrent_agents | 10 | Global concurrency cap |
| agent.max_turns | 20 | Max coding-agent turns per worker run |
| agent.max_retry_backoff_ms | 300000 | Cap on exponential backoff (5 min) |
| codex.command | codex app-server | Subprocess to launch per workspace |
| codex.turn_timeout_ms | 3600000 | Total turn stream timeout (1 hr) |
| codex.stall_timeout_ms | 300000 | Inactivity timeout that triggers a retry |
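One way to picture the configuration layer is as a typed settings object that applies these defaults and resolves `$NAME` values from the environment. The Python sketch below assumes the front matter has already been parsed into a nested dict; `Settings`, `load_settings`, and `resolve_secret` are hypothetical names, and resolving an unset variable to an empty string is an assumption of this sketch rather than documented behavior.

```python
from __future__ import annotations

import os
from dataclasses import dataclass
from typing import Mapping


def resolve_secret(value: str, env: Mapping[str, str]) -> str:
    """Return a literal unchanged, or look up "$NAME" in env (empty if unset)."""
    if value.startswith("$"):
        return env.get(value[1:], "")
    return value


@dataclass(frozen=True)
class Settings:
    # Defaults mirror the table above.
    tracker_kind: str = "linear"
    tracker_api_key: str = "$LINEAR_API_KEY"
    poll_interval_ms: int = 30_000
    max_concurrent_agents: int = 10
    max_turns: int = 20
    max_retry_backoff_ms: int = 300_000
    codex_command: str = "codex app-server"
    turn_timeout_ms: int = 3_600_000
    stall_timeout_ms: int = 300_000


def load_settings(front_matter: dict, env: Mapping[str, str] | None = None) -> Settings:
    """Overlay parsed front matter on the defaults and resolve the API key."""
    env = os.environ if env is None else env
    tracker = front_matter.get("tracker") or {}
    polling = front_matter.get("polling") or {}
    agent = front_matter.get("agent") or {}
    codex = front_matter.get("codex") or {}
    d = Settings()
    return Settings(
        tracker_kind=tracker.get("kind", d.tracker_kind),
        tracker_api_key=resolve_secret(tracker.get("api_key", d.tracker_api_key), env),
        poll_interval_ms=polling.get("interval_ms", d.poll_interval_ms),
        max_concurrent_agents=agent.get("max_concurrent_agents", d.max_concurrent_agents),
        max_turns=agent.get("max_turns", d.max_turns),
        max_retry_backoff_ms=agent.get("max_retry_backoff_ms", d.max_retry_backoff_ms),
        codex_command=codex.get("command", d.codex_command),
        turn_timeout_ms=codex.get("turn_timeout_ms", d.turn_timeout_ms),
        stall_timeout_ms=codex.get("stall_timeout_ms", d.stall_timeout_ms),
    )
```

Because the object is rebuilt from the file each time, a reload after an edit only needs to call `load_settings` again and hand the result to future ticks.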

The poll loop and dispatch logic

At startup, Symphony validates configuration, runs a terminal-workspace cleanup pass, and schedules an immediate tick. After that it polls every polling.interval_ms. Each tick follows a fixed sequence:

- Reconciliation: stalled runs are checked against codex.stall_timeout_ms; tracker state is refreshed for every running issue, and workers are terminated when their tickets go terminal.
- Dispatch validation: tracker.kind must be supported, the API key must resolve, and codex.command must be non-empty. Failure skips dispatch for the tick but keeps reconciliation active.

The orchestration state machine

Symphony tracks an internal claim state per issue, separate from the tracker’s own statuses:

| Internal state | Meaning |
|---|---|
| Unclaimed | No worker, no retry scheduled |
| Claimed | Reserved to prevent duplicate dispatch |
| Running | Worker task active, tracked in the running map |
| RetryQueued | Retry timer set in retry_attempts |
| Released | Claim removed; issue is terminal, missing, or no longer active |

A clean worker exit doesn’t end the ticket. After each successful turn, the worker re-checks tracker state and, if the issue is still active, starts another turn on the same Codex thread (up to agent.max_turns). Continuation turns send only continuation guidance, not the original prompt. After the worker exits normally, Symphony schedules a short continuation retry of about 1 second to check whether more work is needed. Failure-driven retries use exponential backoff, in milliseconds: min(10000 * 2^(attempt - 1), max_retry_backoff_ms). With the default cap, the delays run 10 s, 20 s, 40 s, 80 s, 160 s, and then 300 s for every later attempt.
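The backoff formula is small enough to write out directly. This Python sketch assumes attempts are numbered from 1; the function name `retry_delay_ms` is hypothetical.

```python
def retry_delay_ms(attempt: int, max_retry_backoff_ms: int = 300_000) -> int:
    """Delay before a failure-driven retry: min(10000 * 2^(attempt - 1), cap)."""
    if attempt < 1:
        raise ValueError("attempt is 1-based")
    return min(10_000 * 2 ** (attempt - 1), max_retry_backoff_ms)


# First six attempts with the default 5-minute cap.
delays = [retry_delay_ms(n) for n in range(1, 7)]
# delays == [10000, 20000, 40000, 80000, 160000, 300000]
```

The sixth attempt would be 320000 ms uncapped, so that is where the default cap first takes effect.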

Workspace safety invariants

Workspaces live under workspace.root, which defaults to <system-temp>/symphony_workspaces. Each issue gets a directory named from its sanitized identifier, where any character outside [A-Za-z0-9._-] is replaced with an underscore. Workspaces persist across runs and are not auto-deleted on success.

The spec defines three hard invariants for any implementation:

- The Codex subprocess must launch with cwd == workspace_path, never the parent directory or the orchestrator’s working dir.
- The workspace path, normalized to absolute, must sit inside the workspace root. Anything outside is rejected.
- The workspace key must be sanitized to the allowed character set before it touches the filesystem.
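The sanitization and containment rules can be sketched in a few lines of Python. The function names are hypothetical, and rejecting a path equal to the root itself is an assumption of this sketch. Note that the two checks are not redundant: dots are in the allowed set, so an identifier of `..` survives sanitization and is only caught by the containment check.

```python
import re
from pathlib import Path

# Anything outside [A-Za-z0-9._-] becomes an underscore.
_UNSAFE = re.compile(r"[^A-Za-z0-9._-]")


def workspace_key(identifier: str) -> str:
    """Sanitize an issue identifier into a directory name."""
    return _UNSAFE.sub("_", identifier)


def workspace_path(root: Path, identifier: str) -> Path:
    """Return the absolute workspace path, rejecting anything outside root."""
    root = root.resolve()
    path = (root / workspace_key(identifier)).resolve()
    if path == root or not path.is_relative_to(root):
        raise ValueError(f"workspace path escapes root: {path}")
    return path
```

A worker would then launch the agent subprocess with `cwd` set to the returned path, which covers the first invariant.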

Four optional shell hooks plug into the lifecycle: after_create, before_run, after_run, and before_remove. The first two are fatal on failure; the last two log and continue. Hook timeout defaults to 60 seconds.
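The fatal versus log-and-continue split might look like the following Python sketch. Several details are assumptions made for illustration: hooks are run through `sh -c`, a timeout is treated the same as a non-zero exit, and `run_hook` and `HookError` are hypothetical names.

```python
import logging
import subprocess

log = logging.getLogger("symphony.hooks")

# after_create and before_run abort the run on failure;
# after_run and before_remove only log.
FATAL_HOOKS = frozenset({"after_create", "before_run"})
HOOK_TIMEOUT_S = 60


class HookError(RuntimeError):
    """Raised when a fatal hook fails or times out."""


def run_hook(name: str, script: str, cwd: str, timeout_s: float = HOOK_TIMEOUT_S) -> bool:
    """Run one hook script in the workspace; return True on success."""
    try:
        result = subprocess.run(
            ["sh", "-c", script],
            cwd=cwd,
            timeout=timeout_s,
            capture_output=True,
            text=True,
        )
        ok = result.returncode == 0
    except subprocess.TimeoutExpired:
        ok = False  # assumption: a timeout counts as a failure
    if ok:
        return True
    if name in FATAL_HOOKS:
        raise HookError(f"hook {name} failed")
    log.warning("hook %s failed; continuing", name)
    return False
```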

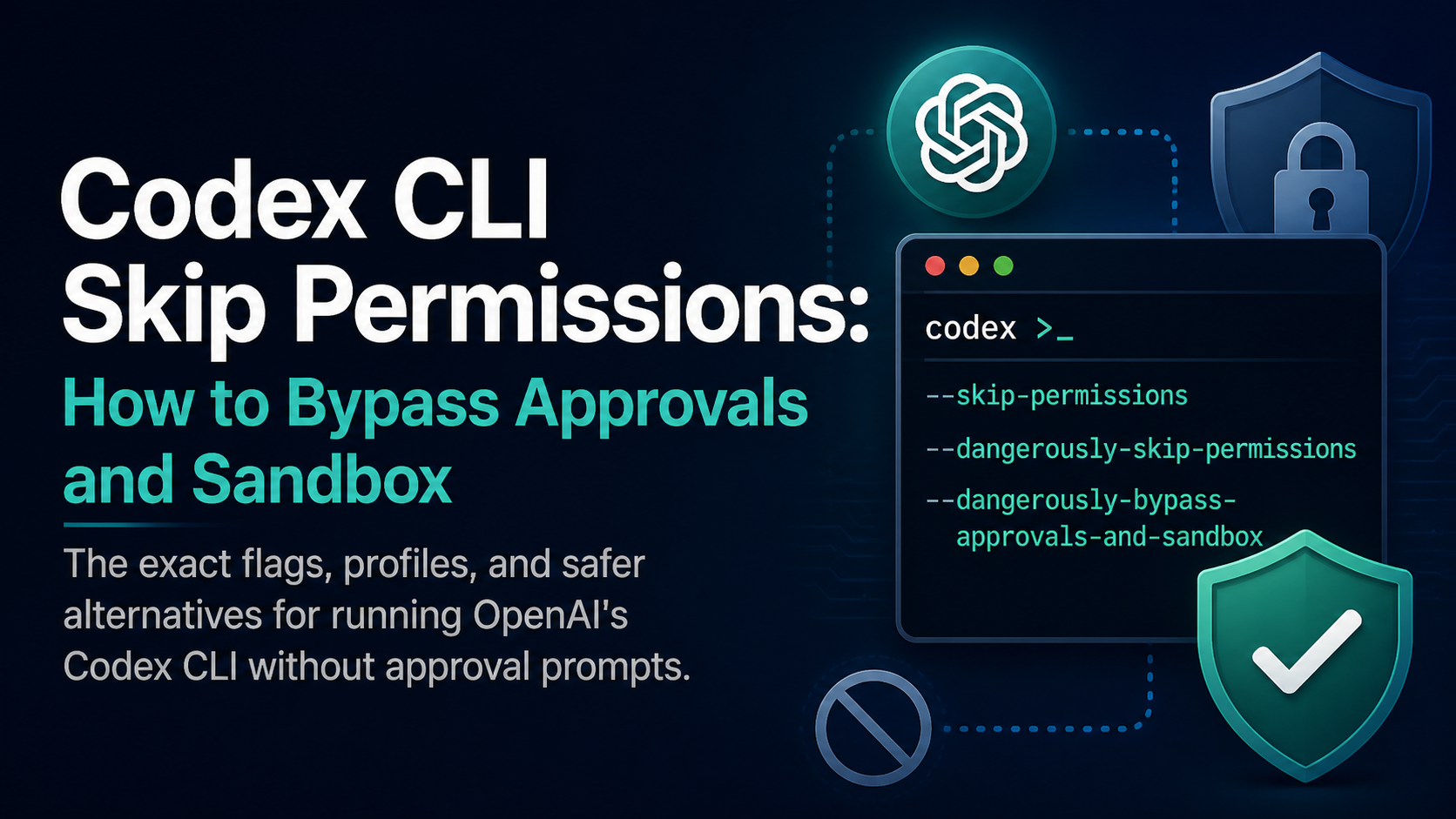

Talking to Codex through the app server

Symphony drives Codex via the Codex App Server, a headless mode that exposes a JSON-RPC-like protocol over stdio. Each worker launches codex app-server via bash -lc in the workspace directory and exchanges line-delimited messages.

The startup handshake is fixed: initialize request, initialized notification, thread/start, then turn/start with the rendered prompt. The session ID is composed from the returned thread and turn IDs as <thread_id>-<turn_id>. Stdout carries the protocol; stderr is treated as diagnostics only.

```json
{"id":1,"method":"initialize","params":{"clientInfo":{"name":"symphony","version":"1.0"},"capabilities":{}}}
{"method":"initialized","params":{}}
{"id":2,"method":"thread/start","params":{"approvalPolicy":"","sandbox":"","cwd":"/abs/workspace"}}
{"id":3,"method":"turn/start","params":{"threadId":"","input":[{"type":"text","text":""}],"cwd":"/abs/workspace","title":"ABC-123: Example"}}
```

Illustrative startup transcript
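As a rough sketch of the client side, the Python helpers below build the same four messages, encode them one per line for the subprocess's stdin, and compose the session ID. The message shapes are copied from the illustrative transcript rather than from a protocol reference, the helper names are hypothetical, and the approval policy and sandbox values are left as caller-supplied parameters because the transcript does not show them.

```python
import json


def encode(message: dict) -> bytes:
    """Serialize one protocol message as a single newline-terminated line."""
    return (json.dumps(message, separators=(",", ":")) + "\n").encode("utf-8")


def initialize_request() -> dict:
    return {
        "id": 1,
        "method": "initialize",
        "params": {"clientInfo": {"name": "symphony", "version": "1.0"}, "capabilities": {}},
    }


def initialized_notification() -> dict:
    # Notifications carry no "id" because no response is expected.
    return {"method": "initialized", "params": {}}


def thread_start(cwd: str, approval_policy: str, sandbox: str) -> dict:
    return {
        "id": 2,
        "method": "thread/start",
        "params": {"approvalPolicy": approval_policy, "sandbox": sandbox, "cwd": cwd},
    }


def turn_start(thread_id: str, prompt: str, cwd: str, title: str) -> dict:
    # thread_id comes from the thread/start response, so this is built last.
    return {
        "id": 3,
        "method": "turn/start",
        "params": {
            "threadId": thread_id,
            "input": [{"type": "text", "text": prompt}],
            "cwd": cwd,
            "title": title,
        },
    }


def session_id(thread_id: str, turn_id: str) -> str:
    """Compose the session ID as <thread_id>-<turn_id>."""
    return f"{thread_id}-{turn_id}"
```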

A turn ends on turn/completed, turn/failed, turn/cancelled, the turn timeout, or subprocess exit. If the agent requests user input, the run fails immediately rather than stalling.

The linear_graphql tool extension

Rather than handing every subagent a Linear access token, Symphony can expose a single client-side dynamic tool, linear_graphql, that executes one GraphQL operation per call against the configured Linear endpoint. The agent supplies a query and optional variables; Symphony performs the request using its own auth and returns the response as structured tool output.

This avoids both Linear MCP and direct token exposure to containers. Multi-operation documents are rejected as invalid input. Transport success with no top-level errors returns success=true; GraphQL errors return success=false with the response body preserved for debugging.
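The success rule can be sketched as a small Python function over the decoded response body. The output shape (`success` and `body` keys) and the function name are assumptions for illustration; the sketch also leaves out the multi-operation check, which would need a real GraphQL parser to do correctly.

```python
def tool_result(response_body: dict) -> dict:
    """Classify a transport-successful GraphQL response.

    success is True only when there are no top-level errors; the body is
    always returned so the agent can inspect failures.
    """
    has_errors = bool(response_body.get("errors"))
    return {"success": not has_errors, "body": response_body}


ok = tool_result({"data": {"issue": {"id": "abc"}}})
failed = tool_result({"errors": [{"message": "Field not found"}], "data": None})
```

Keeping the body on failure matters because GraphQL servers commonly report errors inside an otherwise successful HTTP response, so the status code alone says little.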

Tracker integration boundary

Symphony itself is a scheduler, runner, and tracker reader. It does not write back to Linear. State transitions, comments, and PR links are all performed by the coding agent through tools defined in the workflow prompt. A successful run can end at any workflow-defined handoff state, such as Human Review, rather than forcing tickets to Done.

The Linear adapter must implement three operations: fetch candidate issues in active states, fetch issues in terminal states for startup cleanup, and fetch current states for a list of issue IDs for reconciliation. Pagination defaults to 50 per page with a 30 second network timeout. Normalized issue records expose a stable shape (id, identifier, title, description, priority, state, branch_name, url, labels, blocked_by, created_at, updated_at) regardless of the underlying tracker.
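The adapter boundary could be modeled in Python as a record type plus a three-method interface. The field names follow the list above, but the field types, the method names, and the in-memory stand-in are all assumptions made for this sketch.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class Issue:
    """Normalized issue record; field types are assumed, not specified here."""
    id: str
    identifier: str
    title: str
    state: str
    description: Optional[str] = None
    priority: Optional[int] = None
    branch_name: Optional[str] = None
    url: Optional[str] = None
    labels: tuple[str, ...] = ()
    blocked_by: tuple[str, ...] = ()
    created_at: Optional[str] = None
    updated_at: Optional[str] = None


class TrackerAdapter(Protocol):
    """The three read operations a tracker adapter provides."""

    def fetch_candidates(self, active_states: list[str]) -> list[Issue]: ...
    def fetch_terminal(self, terminal_states: list[str]) -> list[Issue]: ...
    def fetch_states(self, issue_ids: list[str]) -> dict[str, str]: ...


class InMemoryTracker:
    """Test double that satisfies TrackerAdapter without any network calls."""

    def __init__(self, issues: list[Issue]) -> None:
        self._issues = {issue.id: issue for issue in issues}

    def fetch_candidates(self, active_states: list[str]) -> list[Issue]:
        return [i for i in self._issues.values() if i.state in active_states]

    def fetch_terminal(self, terminal_states: list[str]) -> list[Issue]:
        return [i for i in self._issues.values() if i.state in terminal_states]

    def fetch_states(self, issue_ids: list[str]) -> dict[str, str]:
        # Missing IDs are simply omitted from the result.
        return {i: self._issues[i].state for i in issue_ids if i in self._issues}
```

An orchestrator written against `TrackerAdapter` never sees Linear-specific fields, which is what lets another tracker slot in behind the same model.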

Running it yourself

There are two paths laid out in the Symphony repository:

Option 1: Point your own coding agent at SPEC.md and ask it to implement Symphony in whatever language fits your stack. Codex built working versions in Elixir, TypeScript, Go, Rust, Java, and Python from the same spec.

Option 2: Use the experimental Elixir reference implementation under elixir/. The repo includes a README with environment setup instructions, and you can hand that file to a coding agent to scaffold the integration into your repo.

Either way, the prerequisite is a codebase that has already invested in agent-friendly structure: automated tests, clear guardrails, and the kind of harness work that lets Codex operate without constant human steering. Symphony is the layer above that, not a substitute for it. As OpenAI’s team frames it, the bottleneck shifts from writing code to managing agentic work, and the orchestrator is what makes that shift practical.